How the delivery team is using AI and automation at Torchbox

We've seen plenty of posts about delivery managers using AI for routine admin. This isn't that. It's also not a claim that we're doing anything groundbreaking. It's an honest account of the tools and experiments our delivery team have been building, what's clicked, and what hasn't.

For a while, we struggled to see how AI was genuinely relevant to delivery work beyond reviewing emails or drafting meeting notes. That felt like a low bar. Over the past several months, something has shifted. Adoption has increased, and we're finding more creative, practical applications that actually save time and improve the quality of what we hand to clients and teams. Here's where we've got to.

Senior Delivery Manager Jess chats with developer Jakub

Automated sprint status reports

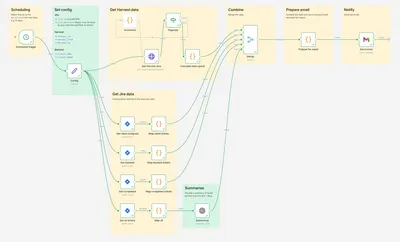

TOOL: N8N + Harvest + Jira

Simon has been building N8N workflows that automatically pull data from Jira and Harvest to generate branded sprint status reports. The workflow looks at what has been completed in the last 14 days, surfaces anything that is blocked by the client, and produces a formatted report ready to share. The template also includes a budget update, pulled automatically from the Harvest project. A report that used to be compiled by hand each fortnight now runs on a schedule.

N8N workflow
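The real workflow lives in N8N, but the report logic described above can be sketched in a few lines of Python. This is an illustration only: the `Ticket` fields, the `build_sprint_report` function and the hours-based budget line are assumptions made for the example, not the actual Jira or Harvest data model or Simon's implementation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Ticket:
    # Hypothetical, simplified view of a Jira issue.
    key: str
    summary: str
    status: str  # e.g. "Done", "Blocked", "In Progress"
    resolved_on: Optional[date] = None
    blocked_by_client: bool = False


def build_sprint_report(tickets, hours_used, hours_budgeted, today, window_days=14):
    """Return a plain-text status report covering the last `window_days` days."""
    cutoff = today - timedelta(days=window_days)

    # Completed work: done tickets resolved inside the reporting window.
    completed = [
        t for t in tickets
        if t.status == "Done"
        and t.resolved_on is not None
        and cutoff <= t.resolved_on <= today
    ]
    # Anything still open that is waiting on the client.
    blocked = [t for t in tickets if t.blocked_by_client and t.status != "Done"]

    # Budget figure (assumed here to come from Harvest as hours).
    percent_used = round(100 * hours_used / hours_budgeted) if hours_budgeted else 0

    lines = [f"Sprint status report ({cutoff.isoformat()} to {today.isoformat()})", "", "Completed:"]
    lines += [f"- {t.key}: {t.summary}" for t in completed] or ["- none"]
    lines += ["", "Blocked by client:"]
    lines += [f"- {t.key}: {t.summary}" for t in blocked] or ["- none"]
    lines += ["", f"Budget: {hours_used} of {hours_budgeted} hours used ({percent_used}%)"]
    return "\n".join(lines)
```

Keeping the filtering in one small function makes it easy to check the 14-day window and the "blocked by client" rule against a handful of known tickets before trusting a scheduled run.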

A shared knowledge base for project learnings

TOOL: NotebookLM

Claudia, Anna and Jess have built a shared library of project learnings using NotebookLM, pulling from end-of-project retrospectives. For each project, they create a lightweight context file covering the type of project, sector, team, key challenges, budget and timeline. That data can then be queried when starting something new.

For example: "I'm about to start a replatform project in the charity sector. What risks are likely to arise?" NotebookLM returns a useful starting point for a risk register, drawn from real project experience. Common challenges, like inadequate API documentation, surface early enough to set expectations with the client at the start of the engagement rather than scrambling mid-project.

Improving the sales-to-delivery handover

TOOL: Custom GPT

Natacha and Jess built a custom GPT to close the information gap that often opens up between sales and delivery. It takes everything from a sales opportunity (the brief, pitch deck, proposal, questions asked, and call transcripts) and automatically populates a crib sheet for the incoming delivery team. Anything that hasn't been confirmed or validated is left blank, flagging exactly what still needs to be asked at handover. It turns a document-hunting exercise into a structured briefing.
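The "leave it blank if it isn't confirmed" rule is the part that makes the crib sheet useful, and it is simple to express. The sketch below is not how the custom GPT works internally; the field names in `CRIB_SHEET_FIELDS` and both functions are hypothetical, chosen only to show the idea.

```python
# Hypothetical crib sheet fields; the real template may differ.
CRIB_SHEET_FIELDS = [
    "client",
    "project_goal",
    "budget",
    "timeline",
    "key_stakeholders",
    "technical_constraints",
]


def build_crib_sheet(extracted):
    """Build a crib sheet from extracted sales information.

    `extracted` maps a field name to a (value, confirmed) pair.
    Fields that are missing, empty or unconfirmed are left blank.
    """
    sheet = {}
    for field in CRIB_SHEET_FIELDS:
        value, confirmed = extracted.get(field, ("", False))
        sheet[field] = value if (confirmed and value) else ""
    return sheet


def open_questions(sheet):
    """List the blank fields, i.e. what still needs asking at handover."""
    return [field for field, value in sheet.items() if value == ""]
```

Calling `open_questions(build_crib_sheet(extracted))` gives the delivery team a ready-made agenda for the handover call.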

Engineering health metrics, straight to Slack

TOOL: N8N + Jira + Slack

Chris built a Jira metrics analysis workflow using N8N that posts an executive summary directly to Slack on a regular cadence. The summary covers key metrics and trend analysis across areas like acceptance criteria adoption, estimation practice maturity, story breakdown discipline and time in progress. Our head of engineering, Chris (as head of QA) and I review it routinely to stay across delivery health without digging through dashboards.
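To make a couple of those metrics concrete, here is a minimal Python sketch of how figures like acceptance criteria adoption and time in progress could be calculated and compared between periods. The dictionary keys (`acceptance_criteria`, `story_points`, `days_in_progress`) and both functions are assumptions for illustration; Chris's workflow runs in N8N and may define these measures differently.

```python
from statistics import mean


def delivery_metrics(stories):
    """Summarise a list of story dicts into a few health metrics."""
    total = len(stories)
    if total == 0:
        return {"ac_adoption_pct": 0.0, "estimated_pct": 0.0, "avg_days_in_progress": 0.0}

    # Share of stories with non-empty acceptance criteria.
    with_ac = sum(1 for s in stories if (s.get("acceptance_criteria") or "").strip())
    # Share of stories that carry an estimate.
    estimated = sum(1 for s in stories if s.get("story_points") is not None)
    # Average time in progress, ignoring stories with no recorded value.
    days = [s["days_in_progress"] for s in stories if s.get("days_in_progress") is not None]

    return {
        "ac_adoption_pct": round(100 * with_ac / total, 1),
        "estimated_pct": round(100 * estimated / total, 1),
        "avg_days_in_progress": round(mean(days), 1) if days else 0.0,
    }


def trend(current, previous):
    """Change in each metric between two periods (current minus previous)."""
    return {name: round(current[name] - previous[name], 1) for name in current}
```

The output of `trend` is the kind of number that reads well in a short Slack summary: a direction and a size, rather than a dashboard to interpret.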

Faster project planning and artefact creation

TOOL: Claude (Cowork)

Natacha has been exploring Claude's Cowork feature within projects as a way to produce project artefacts in a fraction of the usual time. In practice this has covered a lot of ground: building sprint decks, setting goals and planning capacity for sprint planning; synthesising retrospective themes from Miro into actions; creating epics and stories, writing tickets and updating backlogs; and producing project status updates, handover docs and risk registers.

She has also been using it to produce a weekly GLAM (Galleries, Libraries, Archives, and Museums) digest for Slack. Claude has been given context about our clients in the GLAM sector, active projects and strategic priorities, which means the digest surfaces AI news and strategic insights that are actually relevant to our work rather than generic sector noise. It's worth being clear that the digest isn't fully automated: it's curated, edited and manually reviewed before it goes out. The automation handles the heavy lifting of pulling things together; the judgement about what's worth sharing stays with the team.

Generating backlogs from requirements

TOOL: BMAD framework + Python + Jira

A few of us have been experimenting with BMAD, an AI framework for generating epics and user stories from project context. Once the AI has produced the stories, a Python script pushes them automatically to Jira, building out a backlog complete with acceptance criteria written in Gherkin syntax. The output is a starting point for review, not a finished product, but it's a significant head start on what is otherwise one of the more time-consuming parts of building websites.
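For a sense of what the push step involves, the sketch below turns a generated story into a Jira issue payload with Gherkin acceptance criteria in the description. It is not our actual script: the `story` structure and both function names are assumptions. The payload shape follows the Jira REST API v2 create-issue request, where `description` is a plain string (v3 expects Atlassian Document Format instead). The example only builds the payload and makes no network call.

```python
def gherkin_scenario(name, given, when, then):
    """Format one acceptance criterion as a Gherkin scenario."""
    return "\n".join([
        f"Scenario: {name}",
        f"  Given {given}",
        f"  When {when}",
        f"  Then {then}",
    ])


def jira_issue_payload(project_key, story):
    """Build a create-issue payload for one AI-generated story.

    `story` is a hypothetical dict with "summary", "description" and a
    list of "scenarios", each holding name/given/when/then strings.
    """
    scenarios = "\n\n".join(gherkin_scenario(**s) for s in story["scenarios"])
    description = f"{story['description']}\n\nAcceptance criteria:\n{scenarios}"
    return {
        "fields": {
            "project": {"key": project_key},
            "summary": story["summary"],
            "description": description,
            "issuetype": {"name": "Story"},
        }
    }


# Example story, invented for illustration.
example_story = {
    "summary": "Visitor can search events by date",
    "description": "As a visitor, I want to filter events by date.",
    "scenarios": [
        {
            "name": "Filter by a single date",
            "given": "I am on the events listing page",
            "when": "I select a date",
            "then": "I only see events on that date",
        }
    ],
}
payload = jira_issue_payload("PRJ", example_story)
```

Separating payload construction from the API call means the generated stories can be reviewed, or written to a file for a human to check, before anything lands in the backlog.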

A QA advisor you can actually query

TOOL: Custom Gemini

Chris built a custom Gemini tool that lets the team ask QA-related questions about specific projects. We've used it to produce draft test plans, and it also provides guidance on appropriate levels of testing based on the nature of the project, the client and the available budget. It brings some consistency to decisions that were previously made on gut feel or experience alone.

Gemini screenshot

Where we've got to

A year ago, it was hard to see how AI fit into day-to-day delivery work in ways that felt genuinely useful rather than performative. That's changed. The experiments above represent a team that has moved past the novelty phase and is landing on tools and workflows that have real operational value.

A few things have become clear from doing this, beyond the individual tools themselves. Almost everything above happened because someone had capacity to try something new, which raises a question about how sustainable experimentation is when it tends to happen in the gaps. We've also noticed that the ideas which have travelled furthest tend to involve more than one person, and there's probably something in being more deliberate about that rather than leaving it to individuals working alone.

The thing we haven't cracked yet is moving from proof of concept to something operational. We're reasonably quick at getting a working prototype together, but committing to rolling something out, when there are real questions about the quality and reliability of the output, is a different challenge. The harder work now is figuring out how to take the best of what we've built and actually embed it, consistently and confidently, into how we work day to day.

We're approaching this the same way we'd approach it for a client. That means agreeing quality thresholds before anything moves into regular use, assigning a clear owner to each tool so there's someone accountable for monitoring and iterating on it, and building in a structured review period before we consider something genuinely embedded. We're also being honest with ourselves about the difference between something that works and something we trust enough to rely on.

Lisa and me chatting at a recent company get-together

What are you experimenting with?

We're sharing this not because we've figured it all out, but because we found it genuinely useful to see what others were doing when we were still finding our feet. If you're working on something interesting, whether it's a workflow, a tool, a failed experiment, or even just an idea you haven't tried yet, we'd love to hear about it.